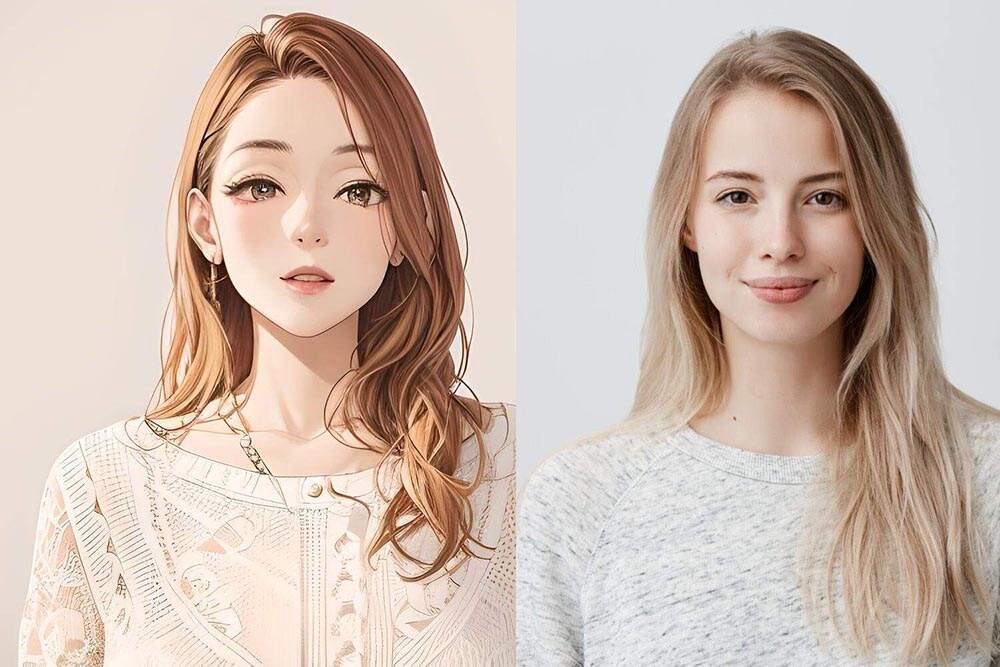

Sometimes you have a crystal-clear image in your head. Picture it: silver hair, a coat caught in the wind, and those faintly glowing blue eyes. Then you open a blank canvas and your hands completely betray you.

That gap between imagination and execution is precisely why anime AI generators broke the internet. These tools don't care that you never passed high school art class. Give them a text prompt, sometimes an oddly precise one like "sad kitsune girl, cherry blossom rain, Studio Trigger style", and in less than three seconds they return something a freelance illustrator might have spent days creating. Occasionally, the output is genuinely gorgeous. Other times your character ends up with six fingers on one hand. But that's half the fun.

So how exactly do these generators function? The majority of anime AI generators are trained on enormous collections of existing artwork: millions of pictures, everything from classic Miyazaki frames to Pixiv fan art uploaded at 2 AM by someone who lives on instant noodles and passion. The model picks up on everything: the sweep of hair during battle, the warmth of diffused lighting, the iconic dinner-plate-sized eyes of shojo manga.

The diffusion model, the technology powering much of this, works like this: you start with pure noise and the AI chips away at it, step by step, shaped by your prompt. Each step removes noise and adds structure. Think of it as darkroom photography, except the darkroom is a server rack of GPUs and the photographer has consumed every anime in existence.

Key players have carved out their niches: NovelAI, Niji Journey (Midjourney's anime-focused mode), and SeaArt all serve different crowds. NovelAI leans heavily into the Danbooru tagging system, and those tags function like a cheat code. Niji Journey leans casual, imprecise, and experimental by nature.
SeaArt sits in between: approachable without demanding you write a dissertation just to get started.

The thorniest problem in all of this? Character consistency. Ask most tools to generate your character a second time and you'll receive a stranger in the same clothes. For anyone attempting real narrative work or a comic series, this inconsistency is maddening. LoRA models changed everything. A LoRA, or Low-Rank Adaptation, lets you fine-tune the generator on as few as 20–30 images of your character. After training, the model remembers the character. Imperfect, yes. But enough to keep your purple-eyed swordsman from inexplicably becoming a green-eyed accountant three panels later.

Who exactly is behind all these generations? More people than you'd expect. Indie game developers with no art budget. Comic creators filling in placeholder panels with AI art while they finish the polished, hand-drawn versions. Authors who simply want to visualize their characters for the first time. Plus a full social media content pipeline churning out AI anime characters, which reads as either a business model or a distress signal, depending on who you ask.

Some artists are angry, and not without reason. Enormous quantities of early training data were collected through unauthorized scraping. That's a substantive complaint, not mere defensiveness. The debate around attribution and compensation for AI-generated art is far from resolved. Far from it.

Still, the tools are here. People are using them. Even professional artists are experimenting, using them for mood boards, client presentations, lighting references, and visual research that used to eat up hours.

Crafting effective prompts is genuinely a learned craft. New users often don't grasp that typing a vague prompt and hoping for a lucky result is like feeding random coordinates into a GPS and expecting a great restaurant recommendation. It'll navigate to something. Just not the thing you had in mind.
Strong prompting has a recognizable shape: start with style (anime, detailed lineart, cel shading), then describe subject, mood, and lighting, and close with a negative prompt for what you want to avoid. Negative prompting is far more powerful than most beginners realize. Telling the model "no extra limbs, no text, no watermark" does more heavy lifting than people imagine.

Iteration is the real game. Run eight outputs. Select the best. Feed it back as a reference. Run eight more. Think of it less as a button and more as a back-and-forth, except your collaborator only speaks in visuals.

Where does the trajectory point? Video is the next frontier, and it's already begun. New tools can animate a character in anime style, complete with lip sync, subtle movement, and blinking. Quality is uneven, especially around hair and hands (the perennial weak spot for AI and human artists alike), but the direction is clear.

Live generation is another frontier opening up. Some platforms already let you sketch a rough character outline and watch it transform into polished anime art as you draw. This isn't about replacing artists. It's closer to having a wildly fast, mildly chaotic creative collaborator.

How you feel about all this probably depends on which side of the equation you're on. It's already in motion, and those thriving in this space long ago stopped debating it. They just kept creating.
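
For anyone who wants to try the prompt shape described earlier (style first, then subject, mood, and lighting, with a negative prompt at the end), here is a minimal Python sketch. Everything in it is an assumption made for illustration: `AnimePrompt` and its field names are hypothetical and not part of any generator's API, and it assumes a tool that takes the negative prompt as a separate field listing the unwanted items, which not every generator does.

```python
from dataclasses import dataclass, field


@dataclass
class AnimePrompt:
    """Hypothetical container for the prompt parts; not a real tool's API."""

    style: list[str]
    subject: str
    mood: str
    lighting: str
    negative: list[str] = field(default_factory=list)

    def positive_text(self) -> str:
        # Style tags come first, followed by subject, mood, and lighting.
        parts = [*self.style, self.subject, self.mood, self.lighting]
        return ", ".join(p.strip() for p in parts if p.strip())

    def negative_text(self) -> str:
        # Assumption: the tool accepts unwanted items as a comma-separated list.
        return ", ".join(n.strip() for n in self.negative if n.strip())


prompt = AnimePrompt(
    style=["anime", "detailed lineart", "cel shading"],
    subject="sad kitsune girl",
    mood="melancholy",
    lighting="soft diffused light, cherry blossom rain",
    negative=["extra limbs", "text", "watermark"],
)

print(prompt.positive_text())
print(prompt.negative_text())
```

Keeping the parts separate makes the iteration loop easier: you can change only the lighting or add one more negative term between batches and still see exactly what differed from the previous run.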
